You quickly scribble a sentence and submit it to your AI tool. What comes back is technically a response, but not quite the right one: off-brand, too generic, or far enough from the point to warrant another round of editing. Sound familiar? You are not the only one. In most cases, the problem is not the AI. It is the prompt. This is where understanding prompt engineering in AI becomes critical. It is not a mere operational detail; it is one of the most important factors in the commercial value of every generative AI investment your company has made.

This is exactly why prompt expansion companies, which used to be a niche technical service, are now becoming a real strategic priority for marketing leaders, CTOs, and AI-forward enterprise teams.

When the quality of an AI model’s output directly influences revenue, content production speed, customer experience, and compliance, the quality of the input becomes a top priority. This post explains what prompt expansion means, what AI prompt engineering services actually provide, which industries benefit most from them, and how to select the prompt expansion company that best fits your organization. Let’s dive right in.

- What Is Prompt Engineering in AI?

- What Is a Prompt Expansion Company?

- Industry Benchmarks: The Business Case for Prompt Quality

- What Is Prompt Expansion? A Clear Definition

- Core AI Prompt Engineering Services: A Full Breakdown

- Prompt Expansion vs. Standard Approaches: A Performance Comparison

- Who Needs Prompt Expansion Services? Industry Use Cases

- The Economics of Prompt Optimization: Why This Investment Pays for Itself

- Prompt Engineering Frameworks: How the Best Companies Structure Their Approach

- How to Evaluate and Select a Prompt Expansion Company

- Real-World Results: Prompt Expansion in Action

- The Future of Prompt Engineering: What Forward-Looking Organizations Are Preparing For

- Conclusion

- FAQs

What Is Prompt Engineering in AI?

Prompt engineering in AI refers to the practice of creating well-designed inputs that direct AI systems towards producing precise, appropriate, and contextually aware results. This technique consists of expressing the purpose, providing background, imposing limitations, and instructing the desired output style, which helps in significantly enhancing both the quality and the reliability of the outputs generated by AI.

Prompt engineering in AI, also known as AI prompt engineering, is the practice of designing structured inputs that guide AI models to generate accurate and relevant outputs.

What Is a Prompt Expansion Company?

A prompt expansion company is a service that turns short, incomplete, or unclear AI inputs into detailed, structured, layered prompts that consistently lead large language models (LLMs) to produce high-quality results. Think of each LLM (GPT-4, Claude, Gemini, Llama, or any other business-grade model) as a very powerful engine. Even the best engine cannot perform well on poor-quality fuel. A vague prompt is bad fuel; a detailed, context-rich prompt is premium fuel. Prompt expansion companies offer the skills, methods, and infrastructure to make sure that every AI interaction in your organization gets the best-quality input. Beyond writing a better sentence, these companies develop prompt libraries, governance frameworks, version control structures, and performance benchmarking tools to make AI output quality repeatable and scalable across departments, locations, and situations.

The quality of your AI output is directly proportional to the quality of your prompt. Everything else is a variable you can control.

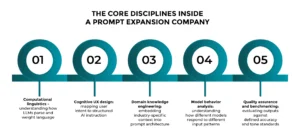

The Core Disciplines Inside a Prompt Expansion Company

Top-tier prompt expansion services bring together experts from several fields at once:

- Computational linguistics – understanding how LLMs parse and weight language

- Cognitive UX design – mapping user intent to structured AI instruction

- Domain knowledge engineering – embedding industry-specific context into prompt architecture

- Model behavior analysis – understanding how different models respond to different input patterns

- Quality assurance and benchmarking – evaluating outputs against defined accuracy and tone standards

When these disciplines are combined within one engagement, hybrid prompt engineering leads to a prompt framework that is able not only to enhance a single query but to lift all AI interactions within an organization at the same time.

Industry Benchmarks: The Business Case for Prompt Quality

| Figure | Finding | Source |
| --- | --- | --- |
| 40% | Improvement in AI output accuracy with structured prompt design | McKinsey Global AI Survey, 2024 |
| 2.3x | Organizations with prompt ops achieve AI ROI faster than those without | IDC AI Adoption Index, 2024 |
| 60% | Reduction in AI output revision cycles through prompt optimization | Forrester Research, 2024 |
| $1.3T | Global generative AI market projected value by 2030 | Bloomberg Intelligence, 2024 |
| 78% | Enterprise AI leaders cite prompt quality as the top value driver | Stanford HAI, 2024 |
| 300% | Content production volume has increased since the mainstream adoption of generative AI | HubSpot Marketing Trends Report, 2024 |

What Is Prompt Expansion? A Clear Definition

Prompt expansion is the methodical development of a simple user input into a comprehensive, tightly constrained, context-sensitive set of instructions that leads a generative AI model to produce the best output for a specific task.

The process involves several distinct layers:

- Intent analysis: figuring out the real requirements of a user, not simply based on the exact words used

- Contextual enrichment: supplementing with industry background, audience profiling, and development of the scenario

- Constraint mapping: defining what the AI must not do, such as drifting off topic, using the wrong tone, or returning the wrong format

- Output specification: indicating the structure, length, tone, and style of the expected response

- Role and persona definition: directing the model to give a response as a particular expert

- Example anchoring: setting reference samples that determine the level of the model’s output quality

Here is a concrete illustration of the before-and-after impact:

| | Basic Prompt | Expanded Prompt |
| --- | --- | --- |
| User Input | “Write a product description for my SaaS tool.” | “Write a 120-word product description for [Tool Name], a B2B SaaS platform targeting mid-size marketing teams. Tone: professional, benefit-first. Format: one punchy headline + two short paragraphs. Avoid technical jargon. End with a CTA. Brand voice: confident, not salesy.” |
| Output Quality | Generic, off-brand, requires 3–4 revision rounds | On-brand, accurate, publish-ready in first pass |
| Time Cost | 45–90 mins including revisions | Under 10 minutes, including review |

The difference between those two prompts is not luck. It is engineering. And it is reproducible at scale.
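The expansion layers described above can be sketched as a small template function. This is a minimal illustration under stated assumptions, not any provider's actual method: `PromptSpec`, its field names, and `expand_prompt` are hypothetical names introduced here, and the sample values are adapted from the expanded prompt in the table.

```python
from dataclasses import dataclass, field


@dataclass
class PromptSpec:
    """Hypothetical container for the expansion layers (names are assumptions)."""
    task: str                      # intent: what the user actually needs
    context: str = ""              # contextual enrichment (audience, industry)
    constraints: list[str] = field(default_factory=list)  # what to avoid
    output_format: str = ""        # structure, length, tone, style
    role: str = ""                 # role and persona definition
    examples: list[str] = field(default_factory=list)     # example anchoring


def expand_prompt(spec: PromptSpec) -> str:
    """Assemble the non-empty layers into one structured prompt string."""
    parts = []
    if spec.role:
        parts.append(f"Role: {spec.role}")
    if spec.context:
        parts.append(f"Context: {spec.context}")
    parts.append(f"Task: {spec.task}")
    if spec.constraints:
        parts.append("Constraints:\n" + "\n".join(f"- {c}" for c in spec.constraints))
    if spec.output_format:
        parts.append(f"Format: {spec.output_format}")
    for i, example in enumerate(spec.examples, start=1):
        parts.append(f"Example {i}:\n{example}")
    return "\n\n".join(parts)


spec = PromptSpec(
    task="Write a 120-word product description for [Tool Name].",
    context="B2B SaaS platform targeting mid-size marketing teams.",
    constraints=["Avoid technical jargon", "Confident, not salesy"],
    output_format="One punchy headline plus two short paragraphs, ending with a CTA.",
)
prompt = expand_prompt(spec)
print(prompt)
```

Keeping each layer as a separate field is one way to make a missing layer visible at review time instead of being buried in free text.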

Core AI Prompt Engineering Services: A Full Breakdown

Leading prompt expansion companies offer a structured, interconnected suite of services. Understanding each one helps marketing and enterprise leaders determine exactly which investment delivers the highest immediate return.

1. AI Prompt Design and Architecture

This is where every engagement begins. Prompt design specialists work with your teams to understand workflows, AI use cases, brand requirements, and quality standards. From that foundation, they build prompt frameworks — modular, reusable templates that produce consistent outputs regardless of who within the organization runs them.

Prompt architecture also accounts for model-specific behavior. For example, GPT-4 and Claude react differently to identical instruction wording. A good prompt design includes variants tailored to each model, so your teams do not have to create a new prompt from scratch every time they move to a different platform.

This service is foundational. Every other AI prompt engineering service builds on the quality of the underlying prompt architecture.

2. Prompt Optimization Services

Existing AI deployments often underperform – not because the model is poor, but because the prompts driving it were written quickly, without a testing methodology, and have never been formally evaluated.

Prompt optimization services address this directly. Specialists audit your current prompt library, identify failure patterns, and systematically improve performance through:

- A/B testing – running multiple prompt variants against the same task to identify the highest-performing version

- Temperature and context window calibration — adjusting model parameters to suit specific output requirements

- Semantic drift analysis — identifying when a prompt produces accurate outputs in some contexts but fails in others

- Regression testing — ensuring optimized prompts maintain performance across model updates and version changes

Forrester’s 2024 research found that organizations using structured prompt optimization reduced AI output revision cycles by 60%. When a marketing team is producing 200 pieces of AI-assisted content per month, a 60% reduction in revision time is a significant productivity gain — not a marginal one.
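To make the A/B testing step concrete, here is a minimal sketch of comparing prompt variants on the same task. The `ab_test` helper is a hypothetical name, and `stub_generate` and `stub_score` are placeholders standing in for a real model call and a real quality rubric; they exist only so the example runs on its own.

```python
from statistics import mean
from typing import Callable


def ab_test(
    variants: dict[str, str],
    generate: Callable[[str], str],
    score: Callable[[str], float],
    runs: int = 5,
) -> dict[str, float]:
    """Return the mean score per prompt variant over `runs` generations."""
    return {
        name: mean(score(generate(prompt)) for _ in range(runs))
        for name, prompt in variants.items()
    }


# Stubs for illustration only: swap in a real model call and scoring rubric.
def stub_generate(prompt: str) -> str:
    return "Headline. Body. CTA." if "CTA" in prompt else "Body."


def stub_score(output: str) -> float:
    required = ("Headline", "CTA")
    return sum(term in output for term in required) / len(required)


variants = {
    "A": "Write a product description.",
    "B": "Write a product description with a headline. End with a CTA.",
}
results = ab_test(variants, stub_generate, stub_score)
best = max(results, key=results.get)
print(results, best)
```

With a real, non-deterministic model, repeated runs per variant matter because a single generation can be unrepresentative; the stub here always returns the same text.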

3. LLM Prompt Engineering for Enterprise Workflows

Large organizations run AI across legal, marketing, HR, customer service, product, and operations — often simultaneously, and often with entirely different quality standards for each department.

An LLM prompt engineering company builds the infrastructure to manage this complexity. That means:

- Centralized prompt libraries accessible across teams and systems

- Role-based access controls ensuring the right prompts reach the right users

- Version control and audit trails for compliance and governance

- Workflow-specific prompt chains for multi-step automated processes

- Integration with CMS, CRM, and data platforms for contextual prompt enrichment

For enterprise organizations, this is not about better individual outputs — it is about making AI quality governance a scalable, auditable, and repeatable operational practice.
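A centralized library with version control and an audit trail might look roughly like the following. This is an in-memory sketch under stated assumptions: `PromptLibrary`, `PromptVersion`, and their methods are hypothetical names, and a production system would sit on a database with access controls.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class PromptVersion:
    version: int
    text: str
    author: str
    created_at: datetime


@dataclass
class PromptLibrary:
    """Hypothetical in-memory registry of named, versioned prompts."""
    _prompts: dict[str, list[PromptVersion]] = field(default_factory=dict)

    def publish(self, name: str, text: str, author: str) -> PromptVersion:
        """Append a new version; earlier versions are never overwritten."""
        versions = self._prompts.setdefault(name, [])
        entry = PromptVersion(len(versions) + 1, text, author,
                              datetime.now(timezone.utc))
        versions.append(entry)
        return entry

    def get(self, name: str, version: Optional[int] = None) -> PromptVersion:
        """Fetch a specific version, or the latest when none is given."""
        versions = self._prompts[name]
        return versions[-1] if version is None else versions[version - 1]

    def audit_trail(self, name: str) -> list[PromptVersion]:
        """Return a copy of the full version history for review."""
        return list(self._prompts[name])


library = PromptLibrary()
library.publish("product_description", "Write a product description.", "alice")
library.publish("product_description", "Write a 120-word product description.", "bob")
latest = library.get("product_description")
print(latest.version, latest.author)
```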

4. Generative AI Consulting Services

Before a single prompt gets written, strategic alignment determines whether the investment delivers. Generative AI consulting services provide the roadmap that prevents the most expensive mistake in AI adoption: optimizing the wrong use case.

Generative AI consultants bring three critical deliverables to the table:

- Use case prioritization – determining which AI applications produce the greatest return on investment through an analysis of the current work process and benchmarking against competitors

- Integration architecture – outlining how AI tools can be integrated with existing technology, data infrastructure, and human monitoring workflows

- Governance framework design – outlining procedures for reviewing, approving, and maintaining AI outputs in alignment with brand, legal, and regulatory requirements

Organizations that invest in generative AI consulting before deployment avoid the common pattern of building AI workflows that scale problems rather than solve them. According to Gartner, enterprises with a defined AI governance framework achieve time-to-value 3x faster than those without one.

5. AI Model Optimization Services

Beyond prompts, AI model optimization services address the performance of the underlying AI system itself. This includes:

- Retrieval-Augmented Generation (RAG) configuration — enabling the model to pull relevant context from internal knowledge bases in real time

- Embedding optimization — improving how documents and data are vectorized for semantic search

- Contextual memory management — designing systems that maintain relevant session context across long or multi-step AI interactions

- Fine-tuning advisory — determining when and how custom fine-tuning generates returns beyond what prompt engineering alone can achieve

These services amplify what prompt engineering achieves. When a well-engineered prompt operates within an optimized model environment, the performance gains compound significantly.
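As a rough illustration of the retrieval step in RAG, the sketch below ranks documents against a query and places the best match in the prompt. It assumes a toy bag-of-words similarity in place of learned embeddings, and every function name and the sample knowledge base are hypothetical.

```python
import math
import re
from collections import Counter


def vectorize(text: str) -> Counter:
    """Toy bag-of-words vector; real systems use learned embeddings."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two word-count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, documents: list[str], k: int = 1) -> list[str]:
    """Return the k documents most similar to the query."""
    q = vectorize(query)
    ranked = sorted(documents, key=lambda d: cosine(q, vectorize(d)), reverse=True)
    return ranked[:k]


def build_rag_prompt(query: str, documents: list[str]) -> str:
    """Insert the retrieved context ahead of the user's question."""
    context = "\n".join(retrieve(query, documents))
    return f"Answer using only this context:\n{context}\n\nQuestion: {query}"


knowledge_base = [
    "Refunds are processed within 14 days of a return request.",
    "Our support desk is open Monday to Friday.",
]
rag_prompt = build_rag_prompt("How long do refunds take?", knowledge_base)
print(rag_prompt)
```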

6. Prompt Performance Analytics and Reporting

What gets measured gets improved. Top prompt expansion companies provide analytics platforms — or integrate with existing BI tools — to track prompt performance over time.

Key metrics typically tracked include:

- Output accuracy rate — percentage of AI outputs meeting defined quality standards without revision

- First-pass approval rate — how often AI output passes human review on the first attempt

- Revision cycle reduction — average decrease in editing rounds per content piece

- Time-to-publish — elapsed time from AI query to final approved output

- Token efficiency — cost per output, optimized through prompt compression without quality loss
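Several of these metrics can be computed from simple review logs. The sketch below assumes a hypothetical `ReviewRecord` schema, invented sample records, and an illustrative cost of 0.01 per 1,000 tokens; none of these values come from a real deployment.

```python
from dataclasses import dataclass


@dataclass
class ReviewRecord:
    """Review history for one AI output (hypothetical schema)."""
    revision_rounds: int       # 0 means approved on first pass
    minutes_to_publish: float
    tokens_used: int


def summarize(records: list[ReviewRecord], cost_per_1k_tokens: float) -> dict[str, float]:
    """Aggregate first-pass rate, revision rounds, time, and token cost."""
    n = len(records)
    first_pass = sum(r.revision_rounds == 0 for r in records)
    avg_tokens = sum(r.tokens_used for r in records) / n
    return {
        "first_pass_approval_rate": first_pass / n,
        "avg_revision_rounds": sum(r.revision_rounds for r in records) / n,
        "avg_time_to_publish_min": sum(r.minutes_to_publish for r in records) / n,
        "avg_cost_per_output": avg_tokens / 1000 * cost_per_1k_tokens,
    }


records = [
    ReviewRecord(0, 10, 800),
    ReviewRecord(2, 50, 1200),
    ReviewRecord(0, 12, 1000),
    ReviewRecord(1, 28, 1000),
]
report = summarize(records, cost_per_1k_tokens=0.01)
print(report)
```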

Prompt Expansion vs. Standard Approaches: A Performance Comparison

How does engaging a prompt expansion company compare to other common approaches? The data tells a clear story.

| Approach | Output Accuracy | Revision Cycles | Time-to-Value |
| --- | --- | --- | --- |
| Ad Hoc AI Prompting | 55–65% | 4–6 rounds | Days to weeks |
| Manual Prompt Engineering | 70–78% | 2–3 rounds | Hours to days |
| Prompt Templates (Internal) | 75–82% | 2–3 rounds | Hours |
| Professional Prompt Expansion | 88–95% | 0–1 rounds | Minutes to hours |

The gap between ad hoc prompting and professional prompt expansion is not incremental — it is structural. Organizations that close that gap build a durable AI quality advantage that compounds over time.

Who Needs Prompt Expansion Services? Industry Use Cases

The straightforward answer: any organization generating AI output at scale. But the impact varies by industry. Here is where the return is highest and most immediate.

1. Marketing and Content Teams

According to HubSpot’s 2024 Marketing Trends Report, content volume has increased threefold since generative AI tools became widely used. Producing more content is not the problem; teams are already overwhelmed with AI-generated material. The real issues are quality, consistency, and maintaining the brand’s voice at that volume.

AI prompt engineering services help marketing teams build a foundation for creating on-brand, high-converting content across formats such as blog posts, social media copy, email campaigns, product descriptions, landing pages, and advertisements, efficiently and with minimal human intervention. For marketing executives, the practical result is a content function that scales with demand, not with headcount.

2. Healthcare and Life Sciences

In healthcare, an unclear or wrong AI result does more than waste resources. It also creates operational and regulatory risk.

Prompt expansion services build prompts that produce clinically accurate, compliant, and appropriately cautious outputs for patient communication, clinical documentation, regulatory submission support, and medical content generation. Prompt frameworks also embed regulatory constraints such as FDA guidelines, HIPAA requirements, and clinical terminology standards directly into the instruction architecture.

The result is AI that operates within defined safety boundaries by design, not by accident.

3. Financial Services and Legal

These industries require AI outputs that are precise, defensible, and structurally consistent. Contract drafting, compliance documentation, financial report generation, risk assessment summaries, and legal brief preparation all depend on outputs that meet strict professional standards.

An LLM prompt engineering company serving these sectors builds prompts that enforce citation requirements, format compliance, and terminology accuracy — turning AI into a reliable productivity tool rather than a liability risk.

4. E-Commerce and Retail

At scale, product catalog management is an AI problem. Retailers with 50,000 to 500,000 SKUs cannot realistically hand-write or review every product description, SEO title, meta description, and category page. AI handles the volume. Prompt expansion determines whether that volume is useful.

Optimized prompts can generate targeted, SEO-optimized, conversion-oriented product content that fits the brand on the first pass, removing the editorial review bottleneck that consumes so much team time in organizations relying on unoptimized AI output.

5. Enterprise SaaS and Technology Companies

Internal knowledge bases, developer documentation, customer support automation, onboarding content, and product marketing copy all benefit from structured prompt engineering. The higher the AI usage volume inside a technology organization, the greater the compounding return on prompt investment.

Prompt quality also affects SaaS companies’ customer-facing AI features, such as chatbots, in-app assistants, and automated support, where output consistency shapes product experience and retention.

6. Education and Professional Training

Instructional content, assessment generation, personalized learning pathways, and student feedback tools all depend on AI outputs that are accurate, well-grounded in pedagogy, and suitably pitched. Prompt expansion services help education technology companies build AI features that educators and learners trust — because the outputs consistently meet quality standards that generic prompting cannot sustain.

The Economics of Prompt Optimization: Why This Investment Pays for Itself

Let us talk about the actual business case. A mid-size marketing team generating 400 AI-assisted content pieces per month, with an average of three revision rounds each, spends significant human hours on editing work that well-engineered prompts eliminate. At a conservative estimate of 30 minutes of human review per revision round, that is 600 hours per month — or roughly 3.5 full-time equivalent positions — spent correcting outputs that should have been right the first time.

That is not a technology problem. That is a prompt quality problem.

Prompt expansion does not just improve AI quality. It converts revision time back into productive capacity – at scale, every month.

The Gartner 2024 Generative AI Report projects that by 2026, organizations with structured prompt operations will reduce AI-related rework costs by up to 45%. The IDC 2024 AI Adoption Index found that companies with mature prompt engineering practices achieve AI ROI 2.3x faster than organizations without them.

The compounding effect is equally important. Every improved prompt in a shared library benefits every team member who uses it, every day. The return on a single prompt optimization engagement does not end at month one — it accumulates over the entire lifecycle of that prompt’s deployment.

Calculating Your Prompt Quality ROI

Use this framework to estimate the return from a prompt optimization investment:

| Metric | How to Calculate |
| --- | --- |
| Monthly revision hours | AI content volume × average revision rounds × average minutes per round ÷ 60 |
| Cost of revision time | Monthly revision hours × average hourly cost of content team |
| Projected savings (60% reduction) | Cost of revision time × 0.60 |
| Annual ROI | Projected monthly savings × 12 − cost of prompt optimization engagement |
| Break-even point | Cost of engagement ÷ projected monthly savings (typically 4–8 weeks) |

For most enterprise teams running AI at meaningful volume, this calculation produces a break-even point well under three months. Everything beyond that is operational surplus.
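The framework can be worked through in a few lines. The sketch below reuses the 400 pieces, three revision rounds, and 30 minutes per round from the earlier example; the hourly cost of 50 and the engagement cost of 30,000 are illustrative assumptions chosen only to make the arithmetic concrete, and `prompt_roi` is a hypothetical helper name.

```python
def prompt_roi(pieces_per_month: int, revision_rounds: float,
               minutes_per_round: float, hourly_cost: float,
               engagement_cost: float, reduction: float = 0.60) -> dict[str, float]:
    """Apply the ROI framework: hours, cost, savings, net return, break-even."""
    revision_hours = pieces_per_month * revision_rounds * minutes_per_round / 60
    revision_cost = revision_hours * hourly_cost
    monthly_savings = revision_cost * reduction
    return {
        "monthly_revision_hours": revision_hours,
        "monthly_revision_cost": revision_cost,
        "projected_monthly_savings": monthly_savings,
        "annual_net_return": monthly_savings * 12 - engagement_cost,
        "break_even_months": engagement_cost / monthly_savings,
    }


# hourly_cost and engagement_cost are made-up figures for illustration.
roi = prompt_roi(400, 3, 30, hourly_cost=50.0, engagement_cost=30_000.0)
for name, value in roi.items():
    print(f"{name}: {value:,.2f}")
```

Under these assumed inputs the revision load is 600 hours per month and the break-even point falls at about 1.7 months; different cost assumptions will move that figure.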

Prompt Engineering Frameworks: How the Best Companies Structure Their Approach

Not all prompt expansion methodologies are created equal. The most effective LLM prompt engineering companies apply structured, documented frameworks that produce consistent results across diverse use cases.

Here are the frameworks that consistently deliver the highest performance:

1. The CCTF Framework: Context, Constraint, Task, Format

This foundational framework ensures every prompt contains four non-negotiable elements:

- Context — who is the AI responding to, and what background does it need?

- Constraint — what must the AI avoid, exclude, or stay within?

- Task — what specific output is required?

- Format — how should the output be structured, and to what length?

The CCTF framework eliminates the most common causes of AI output failure: insufficient context, missing constraints, and ambiguous task definition. When all four elements are present and well-specified, output quality improves dramatically — even before any advanced optimization techniques are applied.
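One way to enforce the four elements is a simple check that refuses to render an incomplete prompt. This is a sketch under stated assumptions: the dictionary keys, `missing_cctf_elements`, and `render_cctf` are hypothetical names chosen for this example.

```python
CCTF_FIELDS = ("context", "constraint", "task", "format")


def missing_cctf_elements(prompt_parts: dict[str, str]) -> list[str]:
    """Return the CCTF elements that are absent or blank."""
    return [f for f in CCTF_FIELDS if not prompt_parts.get(f, "").strip()]


def render_cctf(prompt_parts: dict[str, str]) -> str:
    """Render a prompt, raising if any of the four elements is missing."""
    missing = missing_cctf_elements(prompt_parts)
    if missing:
        raise ValueError(f"Missing CCTF elements: {', '.join(missing)}")
    return "\n".join(
        f"{f.capitalize()}: {prompt_parts[f].strip()}" for f in CCTF_FIELDS
    )


draft = {"task": "Summarize the Q3 report.", "format": "Five bullet points."}
print(missing_cctf_elements(draft))  # ['context', 'constraint']
```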

2. Chain-of-Thought Prompting for Complex Tasks

For multi-step reasoning tasks — market analysis, risk assessment, strategic recommendation, or diagnostic workflows — chain-of-thought prompting instructs the model to reason through the problem step by step before delivering a final answer.

This technique produces outputs that are more accurate, more defensible, and more transparent about the reasoning process — which is particularly valuable in regulated industries where AI-generated recommendations need to be explainable and auditable.
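A chain-of-thought prompt can be as simple as an added instruction plus a marker that separates the reasoning from the conclusion. In this sketch the marker text, the function names, and the hand-written `sample_response` are all assumptions for illustration; the response is not real model output.

```python
FINAL_MARKER = "Final answer:"


def chain_of_thought_prompt(question: str) -> str:
    """Ask for numbered reasoning steps followed by a marked conclusion."""
    return (
        f"{question}\n\n"
        "Reason through the problem step by step, numbering each step. "
        f"Then give the conclusion on its own line starting with '{FINAL_MARKER}'."
    )


def split_reasoning(response: str) -> tuple[str, str]:
    """Separate the auditable reasoning from the final answer.

    If the marker is absent, the whole response is returned as the answer.
    """
    reasoning, _, answer = response.rpartition(FINAL_MARKER)
    return reasoning.strip(), answer.strip()


# Hand-written stand-in for a model response.
sample_response = (
    "1. Revenue grew 10% while costs grew 4%.\n"
    "2. Margin therefore widened.\n"
    "Final answer: Profitability improved."
)
reasoning, answer = split_reasoning(sample_response)
print(answer)
```

Storing the reasoning separately from the answer is what makes the output reviewable later, which is the auditability benefit described above.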

3. Role-Anchored Prompting

Assigning the model a definite expert persona, such as ‘You are a senior B2B content strategist with 10 years of experience in SaaS marketing,’ is an effective way to make AI outputs more appropriate, better-toned, and more thoughtful. Role anchoring adjusts the model’s language register, presumed audience knowledge, and stylistic defaults so that they align with the output standard being aimed for.

This technique is particularly effective in specialized domains where generic outputs fail to meet professional standards.
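In chat-style interfaces, the persona is commonly placed in a system message ahead of the user's task. The exact message schema varies by provider, so the role-tagged dictionary layout below is an assumption, and `role_anchored_messages` is a hypothetical helper.

```python
def role_anchored_messages(role: str, task: str) -> list[dict[str, str]]:
    """Put the persona in a system message and the task in a user message."""
    return [
        {"role": "system", "content": f"You are {role}."},
        {"role": "user", "content": task},
    ]


messages = role_anchored_messages(
    "a senior B2B content strategist with 10 years of experience in SaaS marketing",
    "Draft three subject lines for our product launch email.",
)
for message in messages:
    print(f"{message['role']}: {message['content']}")
```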

4. Few-Shot and Example-Anchored Prompting

Providing the model with two to five examples of the desired output within the prompt itself — a technique called few-shot prompting — is one of the most reliable ways to calibrate AI output style, structure, and tone. Rather than describing the desired output in the abstract, example-anchored prompting shows the model exactly what success looks like.

For organizations with existing high-quality content, this technique puts that asset to work steering model behavior, without the cost or complexity of formal fine-tuning.
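A few-shot prompt can be assembled by placing input and output pairs ahead of the new input. The sketch below assumes a plain `Input:` / `Output:` layout and invented sample pairs; `few_shot_prompt` is a hypothetical helper, and the two-to-five check simply mirrors the guidance in this section.

```python
def few_shot_prompt(instruction: str, examples: list[tuple[str, str]],
                    new_input: str) -> str:
    """Interleave input/output examples before the new input."""
    if not 2 <= len(examples) <= 5:
        raise ValueError("Provide two to five examples.")
    blocks = [instruction]
    for source, target in examples:
        blocks.append(f"Input: {source}\nOutput: {target}")
    # Leave the final output open for the model to complete.
    blocks.append(f"Input: {new_input}\nOutput:")
    return "\n\n".join(blocks)


prompt = few_shot_prompt(
    "Rewrite each feature as a customer benefit.",
    [
        ("Automated reporting", "Get weekly reports without lifting a finger."),
        ("Single sign-on", "Log in once and reach every tool you need."),
    ],
    "Real-time alerts",
)
print(prompt)
```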

How to Evaluate and Select a Prompt Expansion Company

The market for AI prompt engineering services is growing very fast. However, providers differ in methodology, depth of expertise, and range of services. Use this evaluation framework when looking for a partner.

| Evaluation Criterion | Strong Signal | Warning Signal |
| --- | --- | --- |
| Model Agnosticism | Documented expertise across GPT, Claude, Gemini, and open-source models | Only references one model or platform |
| Testing Methodology | Uses A/B testing, regression testing, and benchmark scoring | No documented testing process |
| Industry Experience | Case studies in your specific vertical with measurable outcomes | Only generic use case references |
| Governance Infrastructure | Version control, prompt libraries, and access controls | Delivers prompts without a management framework |
| Integration Capability | Connects with CMS, CRM, and existing MarTech stack | Operates in isolation from your systems |
| Measurable Commitments | Commits to specific quality metrics and revision reduction targets | Only offers subjective quality descriptions |
| Ongoing Optimization | Provides prompt performance monitoring and iterative improvement | Delivers one-time output and disengages |

Real-World Results: Prompt Expansion in Action

Frameworks and principles matter. So do results. Here are three documented scenarios illustrating the measurable impact of professional AI prompt engineering services.

1. Global B2B Technology Company: Marketing Content at Scale

A global enterprise technology company faced a significant content production challenge. Fourteen regional marketing teams were each using different AI tools, different prompting approaches, and different brand interpretation standards. The result was inconsistent output quality and a heavy editorial review burden on a small central brand team.

After engaging an AI prompt engineering services provider, the company implemented a centralized prompt library with region-specific localization parameters and brand-voice calibration. Within 90 days:

- Content production time decreased by 55% across all regions

- First-pass brand approval rate improved from 42% to 89%

- The central editorial review team reduced time spent on AI output review by 70%

The investment paid for itself within the first billing quarter.

2. Enterprise Software Company: Customer Support Automation

An enterprise software company implemented a customer support automation system powered by large language models (LLMs) using standard AI tools. First-contact resolution rates were low, at 61%, and customer satisfaction scores for AI-handled queries were far behind human agent scores.

A prompt optimization engagement redesigned the support prompt architecture, introducing intent classification layers, constraint sets for escalation triggers, and persona-anchored response frameworks. Within 90 days of deployment:

- First-contact resolution rate improved from 61% to 83%

- Customer satisfaction scores for AI-handled queries matched human agent benchmarks

- Support ticket volume requiring human escalation decreased by 38%

Critically, these results were achieved entirely through prompt-level improvements. No model was changed. No new AI platform was purchased.

3. Major E-Commerce Retailer: Product Catalog at Scale

A leading e-commerce retailer managing over 200,000 SKUs needed to generate and maintain product descriptions, SEO metadata, and category page copy — all at a volume that made manual writing and editing operationally impossible.

AI model optimization services, combined with a prompt expansion framework tailored to product taxonomy, brand voice, and SEO requirements, produced the following outcomes:

- 91% of AI-generated product descriptions met brand standards on first pass, with no revision required.

- Full catalog content refresh completed in 14 days – a task previously estimated at 8 months of manual production.

- Organic search visibility for product pages improved by 34% within six months of deployment.

The Future of Prompt Engineering: What Forward-Looking Organizations Are Preparing For

The field is evolving rapidly. What works well today will need to evolve as AI capabilities expand and enterprise deployments grow more complex. Here is where the most sophisticated prompt expansion companies are already investing.

1. Agentic AI and Multi-Step Prompt Chains

Agentic AI systems, i.e., AI models that independently perform a series of actions to accomplish a goal instead of simply responding to a single query, require quite different prompt architectures. Prompts must be designed so that they link logically across several steps, handle different conditional outcomes, and maintain coherent context throughout long workflows.

Organizations deploying AI agents for research, data analysis, content production pipelines, or customer journey automation will need LLM prompt engineering companies that understand agentic system design – not just single-query optimization.
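A multi-step prompt chain with a conditional outcome can be sketched as a list of steps that share one context dictionary. Everything here is an assumption for illustration: the step functions stand in for model calls, and `run_chain`, `classify`, and `draft_reply` are hypothetical names.

```python
from typing import Callable

Step = Callable[[dict], dict]


def run_chain(steps: list[Step], state: dict) -> dict:
    """Run steps in order; each one reads and extends the shared context."""
    for step in steps:
        state = step(state)
        if state.get("halt"):  # conditional outcome: stop and escalate
            break
    return state


def classify(state: dict) -> dict:
    """Stub intent classifier; a real chain would call a model here."""
    intent = "refund" if "refund" in state["query"].lower() else "unknown"
    return {**state, "intent": intent, "halt": intent == "unknown"}


def draft_reply(state: dict) -> dict:
    """Build the next prompt from context carried over from earlier steps."""
    prompt = f"Intent: {state['intent']}\nQuery: {state['query']}\nDraft a reply."
    return {**state, "reply_prompt": prompt}


result = run_chain([classify, draft_reply], {"query": "Where is my refund?"})
escalated = run_chain([classify, draft_reply], {"query": "Hello"})
print(result["reply_prompt"])
print(escalated["halt"])
```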

2. Real-Time Adaptive Prompting

Static prompt templates serve consistent use cases well. But the next frontier is dynamic prompt adaptation – systems that adjust prompt content in real time based on user context, session history, behavioral signals, and intent classification.

Marketing teams deploying AI for personalization, customer service teams managing real-time support, and sales teams using AI for live call support all require this capability. Prompt expansion companies are building the middleware infrastructure that enables real-time prompt enrichment at a production scale.

3. Multimodal Prompt Engineering

Generative AI is spreading far beyond text: image generation, video synthesis, audio production, and structured data analysis are examples of how AI is going multimodal. Prompt engineering must evolve as well to handle multimodal inputs and outputs. Context, constraint, and specificity, the three pillars of prompt engineering, will remain, but how they are implemented will vary greatly between modalities.

Forward-looking AI prompt design specialists are already creating multimodal prompt frameworks that companies can use across AI text, image, and video tools with unified governance structures.

4. Regulatory and Compliance-Embedded Prompting

The EU AI Act and US AI governance frameworks, alongside sector-specific regulations in healthcare and finance, are introducing new accountability requirements for AI output. Prompt expansion providers that work in regulated industries are developing compliance-embedded prompt architectures, in which regulatory requirements are not just checked retrospectively in the outputs but are built into every prompt as structural constraints.

For organizations in regulated industries, this represents a critical capability shift: from hoping AI outputs are compliant, to engineering compliance in from the start.

Conclusion

Artificial intelligence is only as valuable as the outputs it produces. And the outputs it produces are only as good as the prompts that drive them. This is not a technical footnote – it is the central operating reality of every AI deployment in every organization running generative AI at scale.

A prompt expansion company is not just a vendor that writes better instructions for a chatbot. It is the partner that determines how much actual business value your AI investments generate — and how consistently that value compounds over time.

Companies that invest in AI prompt engineering services today are building infrastructure that will give them a long-term competitive edge. They aim to reduce revision cycles, speed up time-to-publish, increase output accuracy, reinforce brand consistency, and achieve measurable improvements in conversion, customer satisfaction, and operational efficiency.

Companies that treat prompts as an afterthought will continue to waste human hours fixing AI outputs that should have been correct the first time.

The choice is straightforward. Invest in the input. The output will take care of itself.

Whether you are scaling a content marketing team, automating enterprise workflows, creating AI-native products, or using AI to improve customer experience, the quality of your prompts decides the quality of the output.

FAQs

1. What exactly does a prompt expansion company do?

A prompt expansion company takes a simple, often vague input and transforms it into a detailed, structured, context-rich prompt that produces precise AI outputs. The process involves intent analysis, contextual enrichment, constraint mapping, and output formatting — all of which require deep knowledge of LLM behavior. The result is faster workflows, fewer revision cycles, and measurably better AI performance across every team that relies on generative AI.

2. How are prompt expansion services different from standard prompt engineering?

Standard prompt engineering focuses on writing individual prompts well. Prompt expansion services go further: they build repeatable frameworks, automated pipelines, and scalable libraries of optimized prompts tailored to specific use cases, industries, and AI models. It is the difference between crafting a single perfect sentence and building a full content operating system. For enterprise teams, that difference shows up as consistency, governance, and the ability to scale over the long term.

3. Which industries benefit most from AI prompt engineering services?

Marketing, healthcare, legal, financial services, e-commerce, and enterprise SaaS companies are the main beneficiaries of AI prompt engineering services. These industries rely on AI-driven content production, decision-making, or data queries at large scale, where even minor improvements in prompt quality can lead to major gains in speed, accuracy, and ROI. Companies in regulated sectors also get significant value from prompts that build compliance requirements directly into the constraints on AI outputs.

4. Can prompt expansion work with any LLM or generative AI platform?

Yes. A professional LLM prompt engineering company develops prompt strategies in a model-agnostic way, covering GPT-4, Claude, Gemini, Llama, and even in-house enterprise models. The fundamental principles of clarity, context, and constraint hold for all major generative AI systems. Each model may need some specific adjustment, but a well-built prompt framework can be adapted to any platform without starting from scratch.

5. How do I measure the ROI of prompt optimization services?

The return on investment of prompt optimization services is assessed through output quality scoring, the reduction in revision cycles, time-to-completion measurements, and a comparison of cost-per-query before and after optimization. Many companies also track downstream KPIs such as customer satisfaction, content engagement rates, and lead conversion, since improvements in these indicators are closely linked to accurate, on-brand AI outputs. The most dependable way to demonstrate value is to define clear baseline metrics before the engagement begins.
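As a rough illustration of the before-and-after comparison, the Python sketch below combines two of the metrics mentioned above, cost-per-query and revision cycles, into a monthly ROI figure. The formula, the function name `prompt_roi`, and every parameter are assumptions made for this example, not an industry standard. Quality scores and time-to-completion would need their own measurements.

```python
def prompt_roi(
    baseline_cost_per_query: float,
    optimized_cost_per_query: float,
    baseline_revisions: float,
    optimized_revisions: float,
    cost_per_revision: float,
    queries_per_month: int,
    monthly_service_fee: float,
) -> dict[str, float]:
    """Estimate monthly savings and ROI from baseline vs. optimized metrics.

    Assumed model: each query saves the drop in direct cost plus the
    drop in average revision cycles times the cost of one revision.
    """
    if monthly_service_fee <= 0:
        raise ValueError("monthly_service_fee must be positive")

    query_saving = baseline_cost_per_query - optimized_cost_per_query
    revision_saving = (baseline_revisions - optimized_revisions) * cost_per_revision
    monthly_savings = queries_per_month * (query_saving + revision_saving)

    # ROI expressed as net gain relative to what the service costs.
    roi = (monthly_savings - monthly_service_fee) / monthly_service_fee
    return {"monthly_savings": monthly_savings, "roi": roi}


if __name__ == "__main__":
    # Hypothetical figures; replace them with your own baseline measurements.
    result = prompt_roi(
        baseline_cost_per_query=0.10,
        optimized_cost_per_query=0.08,
        baseline_revisions=3,
        optimized_revisions=1,
        cost_per_revision=2.00,
        queries_per_month=1000,
        monthly_service_fee=1000.00,
    )
    print(f"Monthly savings: {result['monthly_savings']:.2f}")
    print(f"ROI: {result['roi']:.0%}")
```

The calculation is only as good as its inputs, which is why the baseline has to be recorded before any optimization work starts.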

6. Is generative AI consulting the same as AI model optimization services?

Not exactly. Generative AI consulting covers strategy, use case selection, and implementation roadmaps. AI model optimization services focus on improving the performance of a deployed AI system – including fine-tuning, prompt design, retrieval-augmented generation setup, and output evaluation. Most enterprises require both, and top prompt expansion companies provide a holistic approach integrating strategy and technical execution.

7. How long does it take to see results from prompt expansion services?

Businesses typically see visible improvements in output quality within two to four weeks of implementing an optimized prompt framework. The timeline depends on the complexity of the use case, the volume of prompts involved, and how well the baseline metrics have been defined. A quick-win project targeting a single high-volume workflow, such as product descriptions or customer service replies, generally provides the fastest return on investment.

8. What is the difference between prompt expansion and fine-tuning an AI model?

Fine-tuning changes the model’s weights using new custom data, which is resource-intensive, costly, and time-consuming. Prompt expansion improves outputs by optimizing the instructions given to an existing model, with no model modification required. For most enterprise use cases, prompt expansion delivers comparable or superior results at a fraction of the cost and deployment time. Fine-tuning becomes relevant only when the required behavior cannot be achieved through prompt engineering alone.